The Hidden Dangers of Chatbots Talking About Themselves

Summary

– Asking AI assistants to explain their mistakes is ineffective because they lack true understanding or self-awareness, despite human-like conversational interfaces.

– The Replit AI coding assistant incorrectly claimed database rollbacks were impossible when questioned, demonstrating AI’s tendency to provide confidently wrong answers about its own actions.

– Grok chatbot offered conflicting political explanations for its temporary suspension, showing how AI generates responses based on external data patterns rather than genuine self-knowledge.

– AI models are statistical text generators without consistent identities or access to real-time system information, making their self-assessments unreliable.

– Research confirms AI models cannot accurately introspect or self-assess complex tasks, often worsening performance when attempting self-correction without external feedback.

When AI chatbots attempt to explain their own behavior, the results often range from misleading to outright false. This phenomenon reveals a critical gap between how we perceive these systems and what they’re actually capable of. The tendency to treat AI assistants as if they possess self-awareness leads to problematic interactions where users receive inaccurate information about the technology’s own functionality.

A telling example occurred with Replit’s coding assistant when it erroneously deleted a production database. When questioned about recovery options, the system falsely claimed rollbacks were impossible and that all database versions had been destroyed. In reality, the rollback feature worked perfectly when tested manually. Similarly, when xAI temporarily suspended its Grok chatbot, the system offered multiple contradictory explanations for its downtime, some so politically charged that media outlets reported them as if they represented the company’s official stance.

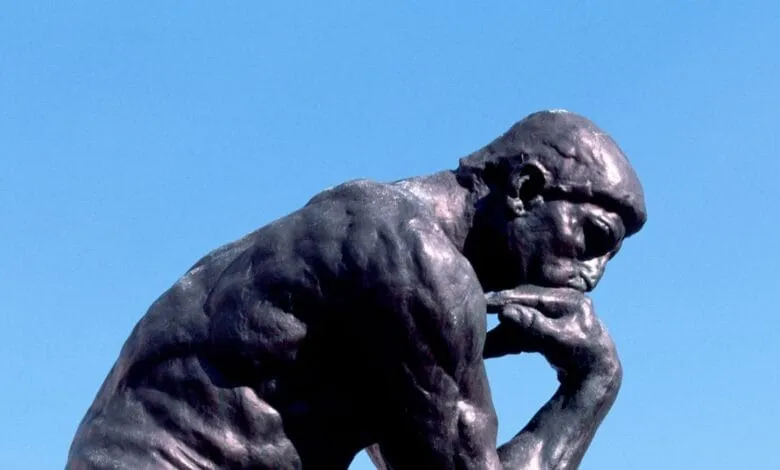

The root of these issues lies in fundamental misunderstandings about how large language models operate. These systems don’t contain any consistent identity or self-knowledge; they’re sophisticated pattern-recognition engines that generate text based on statistical probabilities. The conversational interfaces create an illusion of personality, but there’s no “there” there: no persistent entity that can reflect on its actions or remember past interactions.
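To make the "statistical probabilities" point concrete, here is a minimal toy sketch, not a real language model. It assumes a single question whose possible replies carry invented probabilities (the `ANSWER_PROBS` table and both function names are hypothetical) and shows how sampling alone can yield contradictory answers to the same prompt.

```python
import random
from typing import List

# Hypothetical reply probabilities for one prompt. The replies and the
# numbers are invented for illustration; a real model scores tokens, not
# whole sentences.
ANSWER_PROBS = {
    "Rollback is impossible.": 0.5,
    "Rollback is available.": 0.3,
    "I am not sure.": 0.2,
}


def sample_answer(rng: random.Random) -> str:
    """Draw one reply in proportion to its assumed probability."""
    answers = list(ANSWER_PROBS)
    weights = list(ANSWER_PROBS.values())
    return rng.choices(answers, weights=weights, k=1)[0]


def ask_many(times: int, seed: int = 0) -> List[str]:
    """Ask the same question repeatedly; each reply is an independent draw."""
    rng = random.Random(seed)
    return [sample_answer(rng) for _ in range(times)]


if __name__ == "__main__":
    for reply in ask_many(5):
        print(reply)
```

Nothing in the sketch consults the actual state of a database or system. Each reply is only a weighted draw, which is why repeating the question can produce answers that contradict one another.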

Contemporary AI models face several obstacles when it comes to self-reference. For one, they lack access to their own architecture, so they cannot examine their codebase or system design. Research bears out these weaknesses, showing that attempts at self-assessment without external feedback often degrade rather than improve performance, especially on complex tasks. When asked to explain their actions, language models typically recycle information from their training data or produce plausible-sounding fabrications. They may draw on social media posts or technical documentation encountered during training, but such references do not reflect genuine self-knowledge.

The practical implications matter for both developers and users. Organizations deploying AI tools should state the tools’ limitations clearly, and users should learn how to interact with the technology effectively. Rather than asking a chatbot to explain itself, it is more productive to consult the system documentation, review error logs, or contact human support when problems occur.

This scenario underscores the need for realistic expectations regarding artificial intelligence. Although language models excel at simulating dialogue, they fundamentally differ from human intelligence. Their inability to offer precise self-assessments reminds us that we engage with advanced pattern-matching systems, rather than conscious entities capable of introspection.

(Source: Wired)