Google Gemini Now Generates Nano Banana Images

Summary

– Google has integrated its Nano Banana image generation models into Gemini’s Personal Intelligence feature, allowing it to create images using a user’s personal data from apps like Gmail, Photos, and Calendar.

– The feature is initially launching for Plus, Pro, and Ultra subscribers in the United States, with a planned broader rollout that notably excludes Europe.

– Nano Banana is Google’s native family of conversational image generators for Gemini, with three distinct versions built on different Gemini models for varying capabilities and speeds.

– This integration leverages Google’s vast ecosystem of user data to create a personalized image generation service that competitors lack the data breadth to easily replicate.

– The rollout raises privacy considerations: the feature is opt-in and includes a transparency tool, but Europe’s exclusion suggests potential regulatory concerns.

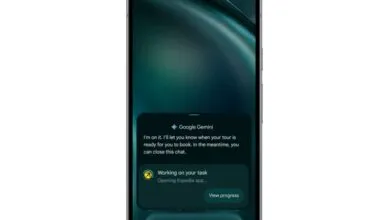

Google is now integrating its Nano Banana image generation models directly into the Gemini Personal Intelligence framework. This significant update allows the AI to produce images that are informed by a user’s personal data from connected Google services like Gmail, Photos, Calendar, and Drive. The move transforms Gemini from a tool that responds to typed prompts into one that can create visuals based on who you are and what you do. The feature is launching first for paying subscribers in the United States, with a broader rollout planned, though European users are excluded from this initial phase.

This capability is powered by Nano Banana, Google’s native family of image models built specifically for the Gemini ecosystem. Unlike the company’s standalone Imagen models, which are designed for high-quality, iterative professional work, Nano Banana is optimized for conversational use within Gemini and accepts both text and images as inputs. The family now includes three versions: the original model for basic tasks, the faster Nano Banana 2, and the advanced Nano Banana Pro. Nano Banana Pro draws on the full reasoning power of the Gemini 3 Pro model, aiming to generate images that reflect a deeper comprehension of a request rather than simple pattern matching.

The technical premise is that by being natively integrated, Nano Banana can utilize Gemini’s sophisticated language understanding to interpret the nuance and intent behind a prompt more effectively than a separate, attached image generator. The model can reason about the request, drawing context from the ongoing conversation and, crucially now, from a user’s private information.

This marks a major expansion for Personal Intelligence, the framework Google introduced earlier this year that links Gemini to a user’s Google account. Until now, this connection primarily personalized text-based responses, letting Gemini answer questions about travel plans by scanning your emails or make suggestions based on your history. With image generation added, the personalization becomes visual. Potential uses include creating images that incorporate elements from your personal photo library or generating visuals that reflect your specific tastes and life circumstances. A key feature is a “sources” button that shows users which pieces of their personal data informed a generated image, providing a layer of transparency for AI content.

Google’s approach leverages a unique competitive advantage: its unparalleled repository of user data across market-leading services. By connecting a capable image generator to this vast, cross-platform dataset encompassing email, photos, search, and maps, Google creates a personalization moat that rivals like OpenAI or Apple cannot easily match without a similar ecosystem. This strategic integration arrives as competitors push their own AI features, with ChatGPT driving engagement through image generation and Apple focusing on on-device intelligence.

The announcement also notes that on-device image generation via Gemini Nano is coming to Pixel and Android devices, promising private, instant creation without needing the cloud. This dual approach, combining powerful cloud-based personalized generation with fast, local processing, aims to cover a wide spectrum of user needs.

Inevitably, this deep integration raises privacy questions. While Google states it does not train its models on personal data and the feature is strictly opt-in, the system must still process that sensitive information to generate contextually relevant images. The distinction between using data for inference and using it for training is technically important but may not comfort all users. The exclusion of Europe from the launch suggests Google anticipates regulatory scrutiny under the GDPR and the EU AI Act, continuing a pattern of delayed feature rollouts in that region due to stricter data protection standards.

For subscribers who enable it, the value is an AI assistant capable of producing images uniquely relevant to their lives. For others, the trade-off between profound personalization and granting broad data access remains a fundamental consideration, one that has long defined the relationship with Google’s services. This feature represents the latest, and most visually creative, iteration of that enduring bargain. Google has not announced any new fees, which suggests the capability is included in the existing paid Gemini tiers (Plus, Pro, and Ultra), with access prioritized for higher-paying users.

(Source: The Next Web)