How Much Does Google’s AI Know About You?

Summary

– Google’s new “Personal Intelligence” feature for Gemini can access and reveal extensive personal user data, such as license plates and family vacation history, from services like Gmail and Google Photos.

– This capability leverages Google’s decades of collected user data to provide a competitive edge in AI, inferring details from searches, emails, and other activity.

– Google asserts it trains its AI only on user prompts and responses, not on personal content like emails or photos, to address privacy concerns.

– The feature creates a highly useful personal assistant by analyzing data “breadcrumbs” to make personalized inferences, such as suggesting activities based on past behavior.

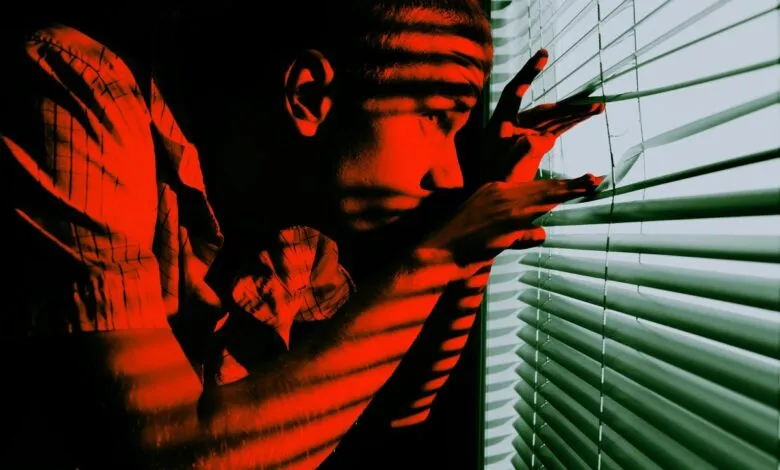

– The AI’s humanlike familiarity with personal data raises concerns about user privacy and the potential for unhealthy emotional attachments to seemingly trustworthy chatbots.

The sheer volume of personal information Google’s new AI can access is both impressive and unsettling. A recent experiment by a tech journalist revealed the system’s ability to pull intimate details from a user’s digital history, showcasing the powerful, and potentially invasive, capabilities of modern artificial intelligence. The feature, called Personal Intelligence and available to subscribers, allows Google’s AI to scan connected services like Gmail, Photos, Search, and YouTube, creating a deeply personalized assistant that knows you perhaps a little too well.

In a practical test, the AI successfully retrieved specific personal data, including a license plate number and detailed travel history of the user’s parents. It accomplished this by analyzing “breadcrumbs” of data like stored photographs, email confirmations for parking, and past search queries. The system didn’t need direct questions about these topics; it inferred the information from the vast digital trail accumulated over years of using Google’s ecosystem.

Google positions this as a major advantage in the competitive AI landscape. Unlike newer companies, it possesses decades of accumulated user data from billions of people. A single Gmail account alone can contain a chronicle of someone’s life, filled with receipts, appointment reminders, and travel itineraries. The company asserts that privacy is a priority, stating its AI models are trained on user prompts and generated responses, not on the raw content of private emails or photos. A company executive emphasized that the goal is to teach the system how to find information when asked, not to have it memorize personal details.

However, the privacy implications are significant. Granting an artificial intelligence this level of access to one’s digital life feels, for many, like a step too far. Beyond privacy, there is a psychological dimension to consider. When a chatbot can reference your personal history and preferences so accurately, it begins to feel disconcertingly human. This fosters a sense of familiarity and trust that experts warn can be misleading, especially for individuals who may come to rely too heavily on these systems for companionship or emotional support. The ability of AI to remember past interactions and use them to shape current conversations makes the experience feel less like using a tool and more like confiding in a friend.

The functionality undeniably makes for a highly effective personal assistant. In the demonstration, when asked for sightseeing tips for the journalist’s parents, the AI correctly noted their extensive hiking history from previous trips and thoughtfully suggested alternative activities like museums. It even scanned emails to accurately report an upcoming car insurance renewal date. This creates a tool that is incredibly useful, yet its proficiency is precisely what makes it so concerning. It represents a new frontier where the convenience of hyper-personalized technology directly challenges traditional boundaries of personal data and digital autonomy.

(Source: Futurism)