SpaceX and Blue Origin Race to Build Orbital Data Centers

Summary

– SpaceX, Blue Origin, and several startups are proposing orbital data centers that would power AI with near-constant solar energy and bypass terrestrial grid constraints.

– SpaceX and Blue Origin have filed FCC applications for massive satellite constellations (up to 1 million and 51,600 satellites, respectively) to host computing hardware in low Earth orbit.

– Significant physics challenges include dissipating heat, which requires massive radiators, and protecting hardware from radiation, which increases costs and reduces performance.

– Orbital latency makes the infrastructure unsuitable for AI model training, and launch costs remain prohibitively high compared to terrestrial alternatives.

– Astronomers oppose these plans over light pollution and orbital congestion, while experts question their near-term feasibility despite ongoing terrestrial energy shortages.

The idea of building massive orbital data centers to power artificial intelligence offers a compelling answer to a real-world crisis. With terrestrial electricity grids buckling under explosive demand from AI, the prospect of harnessing near-constant solar power in space has captured the imagination of major aerospace firms. However, the immense technical and economic hurdles suggest a vision that may be decades ahead of practical reality, constrained by physics that cannot be ignored.

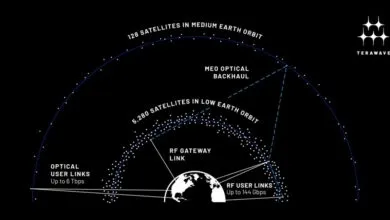

SpaceX started this new space race with a staggering regulatory filing in January. The company requested FCC approval to launch up to one million satellites equipped with computing hardware, creating a sprawling low Earth orbit constellation designed to maximize solar exposure. The network would use the existing Starlink infrastructure to route data, and SpaceX is seeking unusual flexibility on standard deployment timelines. Not to be outdone, Blue Origin submitted its Project Sunrise proposal just weeks later. Although more modest in scale at 51,600 satellites, Blue Origin's plan emphasizes a sophisticated architecture in which computation happens in orbit and results are relayed to Earth via a separate, high-speed optical network called TeraWave.

Meanwhile, the startup sector is advancing rapidly. Starcloud reached unicorn status, a valuation of more than $1 billion, with a $170 million raise and has already launched a test satellite carrying a commercial-grade Nvidia GPU. Another company, Aethero, is developing radiation-hardened computers for defense applications and is testing its hardware in space this year. This commercial urgency is driven by an undeniable problem on the ground. Global data center electricity consumption is skyrocketing and is projected to surpass 1,000 terawatt-hours by 2026. In regions like Virginia and Ireland, data centers already consume over a quarter of total power, and further expansion faces real grid constraints, permitting delays, and political opposition.
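For scale, an annual energy figure can be converted into an average continuous power draw by dividing by the number of hours in a year. The sketch below is a unit conversion only and assumes demand is spread evenly across the year, which real data center loads only approximate. The function name `average_power_gw` is illustrative.

```python
HOURS_PER_YEAR = 365 * 24  # 8,760 hours in a non-leap year


def average_power_gw(annual_twh: float) -> float:
    """Convert annual energy use (TWh) into average continuous power (GW).

    Assumes the energy is consumed evenly over the year.
    """
    # 1 TWh = 1,000 GWh, and GWh divided by hours gives GW
    return annual_twh * 1000 / HOURS_PER_YEAR


if __name__ == "__main__":
    gw = average_power_gw(1000)
    print(f"1,000 TWh/yr is roughly {gw:.0f} GW of continuous demand")
```

Under that even-load assumption, 1,000 TWh a year works out to roughly 114 GW of round-the-clock demand, or on the order of a hundred one-gigawatt facilities.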

Yet scientists point to fundamental physical barriers that make space-based computing extraordinarily difficult at the required scale. The primary obstacle is heat dissipation. In the vacuum of space there is no air or water to carry heat away, so cooling relies solely on thermal radiation, which requires massive surface areas. Radiating just one megawatt of waste heat while keeping electronics cool would require a radiator roughly the size of four tennis courts. A commercially relevant facility would need a cooling apparatus thousands of times larger than anything on the International Space Station.
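The tennis-court figure is consistent with the Stefan-Boltzmann law, P = εσA(T⁴ - T_sink⁴). The sketch below is an idealized estimate under assumed values not given in the article: a two-sided panel at a uniform 300 K, emissivity 0.9, a 3 K deep-space sink, and no absorbed sunlight or Earth infrared. Because real radiators also absorb heat from those sources, this is an optimistic lower bound. The function and constant names are illustrative.

```python
# Stefan-Boltzmann constant, W / (m^2 K^4)
SIGMA = 5.670374419e-8
# Area of a doubles tennis court (23.77 m x 10.97 m), in m^2
TENNIS_COURT_M2 = 23.77 * 10.97


def radiator_panel_area(heat_w: float, temp_k: float, emissivity: float = 0.9,
                        sink_k: float = 3.0, sides: int = 2) -> float:
    """Panel area (m^2) needed to radiate heat_w watts at temperature temp_k.

    Idealized: assumes a uniform panel temperature radiating from `sides`
    faces, and ignores absorbed sunlight and Earth's infrared emission.
    """
    # Net radiated power per m^2 of radiating surface
    flux = emissivity * SIGMA * (temp_k**4 - sink_k**4)
    return heat_w / (flux * sides)


if __name__ == "__main__":
    area = radiator_panel_area(1e6, temp_k=300.0)  # 1 MW of waste heat
    print(f"{area:,.0f} m^2, about {area / TENNIS_COURT_M2:.1f} tennis courts")
```

With these assumptions the panel comes out at roughly 1,200 m², between four and five tennis courts. Because radiated power scales with T⁴, a hotter radiator shrinks quickly, but the chips it cools must stay within their operating temperatures, which caps how hot the panel can run.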

Radiation hardening presents a second major challenge. In low Earth orbit, cosmic rays bombard electronics, causing errors and damage. Hardening chips against this adds significant cost and reduces performance, while the main alternative, running three redundant copies of the hardware and voting on the result, triples the required mass, power, and cooling. Latency forms a third constraint. Communication delays between satellites and to the ground, measured in tens to hundreds of milliseconds, are incompatible with the microsecond-scale connections needed for AI model training. This limits potential orbital use to inference tasks, a small fraction of today's compute demand.
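Both constraints in this paragraph reduce to simple arithmetic and logic. The sketch below makes two illustrative assumptions: a hypothetical orbital altitude of 550 km with the satellite directly overhead (the best case for a ground link), and triple modular redundancy (TMR) with majority voting as the redundancy scheme. The article does not say which scheme any company uses. The function names are hypothetical.

```python
from collections import Counter

SPEED_OF_LIGHT_KM_S = 299_792.458  # speed of light in vacuum, km/s


def propagation_delay_ms(distance_km: float) -> float:
    """One-way light travel time in milliseconds.

    This is a physical floor: it excludes routing, switching, and queuing.
    """
    return distance_km / SPEED_OF_LIGHT_KM_S * 1000


def majority_vote(results):
    """Triple modular redundancy: return the value at least two of three replicas agree on."""
    if len(results) != 3:
        raise ValueError("TMR expects exactly three replica results")
    value, count = Counter(results).most_common(1)[0]
    if count < 2:
        raise RuntimeError("no majority: all three replicas disagree")
    return value


if __name__ == "__main__":
    # Hypothetical 550 km altitude, satellite straight overhead
    print(f"{propagation_delay_ms(550):.2f} ms one way")
    # One replica suffered a radiation-induced bit flip (0b1011 -> 0b1111);
    # the other two outvote it
    print(majority_vote([0b1011, 0b1111, 0b1011]))
```

Even this best-case floor of about 1.8 ms is more than a thousand times a one-microsecond budget, before any routing is added. The voting step also shows why redundancy is expensive: every result needs three full copies of the hardware, each drawing power and shedding heat.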

The economic case remains equally daunting. Current launch costs are orders of magnitude higher than the threshold needed to compete with terrestrial data centers. Building a one-gigawatt facility in orbit could cost over $50 billion, and analysts suggest launch prices must fall to around $30 per kilogram for the concept to be viable, a target not projected to be reached for decades. Even prominent AI leaders such as Sam Altman have called the concept unrealistic for this decade, questioning the basic cost math and pointing to the impracticality of repairing hardware in space.
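The launch bill scales linearly with price per kilogram, which is why the $30/kg threshold matters so much. In the sketch below, only the $30/kg price point comes from the article. The payload mass and the other price points are hypothetical placeholders chosen for illustration, not estimates for any proposed facility. The function also ignores hardware, assembly, and insurance costs.

```python
def launch_cost_usd(mass_kg: float, price_per_kg: float) -> float:
    """Launch bill = mass to orbit x price per kilogram.

    Deliberately ignores hardware, in-orbit assembly, and insurance.
    """
    return mass_kg * price_per_kg


if __name__ == "__main__":
    # HYPOTHETICAL payload mass for illustration only; not from the source
    mass_kg = 10_000_000  # 10,000 tonnes
    # Hypothetical price points in USD/kg; only $30/kg appears in the article
    for price in (3_000, 300, 30):
        billions = launch_cost_usd(mass_kg, price) / 1e9
        print(f"${price:>5,}/kg -> ${billions:,.1f} billion")
```

Whatever the real mass turns out to be, each tenfold drop in price per kilogram cuts the launch bill tenfold. That linear relationship is why the viability argument hinges on how low launch prices fall.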

Astronomers have raised separate, vigorous objections, warning that constellations of this magnitude would permanently pollute the night sky with more satellites than visible stars. Despite these profound challenges, the pursuit continues because the underlying terrestrial problems persist. Breakthroughs in launch vehicles like Starship or incremental engineering progress from startups could eventually change the calculus. For now, the gap between regulatory ambition and physical reality is vast. The critical question is not whether orbital data centers are theoretically possible, but why such a complex solution is being pursued for a problem that demands immediate answers. The true limit for this ambitious vision is not the sky itself, but the unsolved challenge of building a radiator in the void.

(Source: The Next Web)