OpenAI Enhances Teen Safety With New Features

Summary

– OpenAI is introducing new teen safety features for ChatGPT, including an age-prediction system that blocks graphic sexual content for minors and alerts parents or authorities in cases of self-harm or suicide risk.

– The company is trying to balance freedom, privacy, and safety for teen users; CEO Sam Altman acknowledges that these principles conflict and says the resulting decisions, though difficult, were made with expert input.

– Parental controls will be rolled out by the end of September, allowing parents to link accounts, manage conversations, set usage time limits, and receive notifications during moments of acute distress.

– These changes come amid growing scrutiny from lawmakers and the FTC regarding AI’s impact on minors, following troubling incidents involving chatbot interactions and user harm.

– Altman takes personal accountability for these decisions, emphasizing his role in overseeing model behavior and the importance of protecting users while navigating privacy concerns.

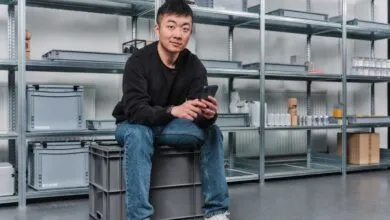

OpenAI has introduced a suite of new safety features aimed at protecting teenage users of ChatGPT, addressing growing concerns about how young people interact with AI chatbots. The initiative includes an age-prediction system designed to identify users under 18 and automatically direct them to a more restricted experience that filters out graphic sexual content. If the system detects signs of suicidal ideation or self-harm, it is designed to notify parents. If the danger is immediate and parents cannot be reached, the protocol may involve alerting emergency services.

In a public statement, CEO Sam Altman acknowledged the inherent tension between user freedom, privacy, and safety. He emphasized that while these values sometimes conflict, the company is committed to transparency in its decision-making process. After consulting with experts, OpenAI has chosen to prioritize teen safety above other considerations, even when that means limiting certain freedoms available to adult users.

By the end of September, parents will gain access to a new set of controls allowing them to link their child’s account, monitor interactions, and restrict specific features. They will also receive alerts if the system identifies that their teen is experiencing significant emotional distress. Additionally, parents can set time-based usage limits, helping manage how and when their children engage with the AI.

These updates arrive amid increasing public alarm over incidents where individuals have harmed themselves or others following intense interactions with conversational AI. Regulatory attention has followed, with agencies like the FTC requesting details from leading AI firms, including Meta, Google, and OpenAI, about how their products affect young users.

Notably, OpenAI remains under a legal obligation to retain user conversations indefinitely, a requirement the company has reportedly resisted. While the new teen safety measures represent a meaningful effort to protect vulnerable users, they also serve a strategic purpose by reinforcing the idea that AI conversations are deeply personal and should only be interrupted in critical situations.

Internally, OpenAI researchers grapple with the challenge of creating engaging yet responsible AI experiences. Without comprehensive federal regulation, the company’s actions remain voluntary, though leadership insists accountability rests at the top. During a recent interview, Altman stated that he bears ultimate responsibility for the ethical direction of the models, noting that he or the board can override any decision made by the behavior tuning team.

(Source: Wired)