Is ChatGPT Making Us Dumber and Lazier?

Summary

– Generative AI adoption has surged since 2022, accelerating tasks like research and content creation, but experts caution against unchecked use due to potential consequences.

– An MIT study found that students using ChatGPT for essays showed lower mental connectivity and engagement, while those writing manually demonstrated higher cognitive effort and better outcomes.

– Researchers emphasized that over-reliance on AI can reduce mental engagement and critical thinking, but active, thoughtful use may mitigate these risks.

– The study’s authors urged against sensationalist interpretations, noting limitations and the need for more research on AI’s role in education and cognition.

– Addressing bias in AI requires standards to be defined at both the individual and the societal level, since historical training data may not reflect evolving norms; critical engagement with AI outputs is therefore necessary.

Since its introduction in 2022, ChatGPT has been rapidly integrated into professional, academic, and personal spheres, offering unprecedented efficiency in tasks like research and content generation. While generative AI tools are being adopted faster than earlier technologies like the internet or personal computers, experts caution that this powerful innovation demands thoughtful oversight to avoid unintended consequences.

One pressing question is whether growing reliance on AI could diminish human cognitive abilities. A recent MIT study examining ChatGPT’s use in essay writing sparked dramatic headlines suggesting the tool might be “rotting brains” or fostering laziness. But the reality is more nuanced.

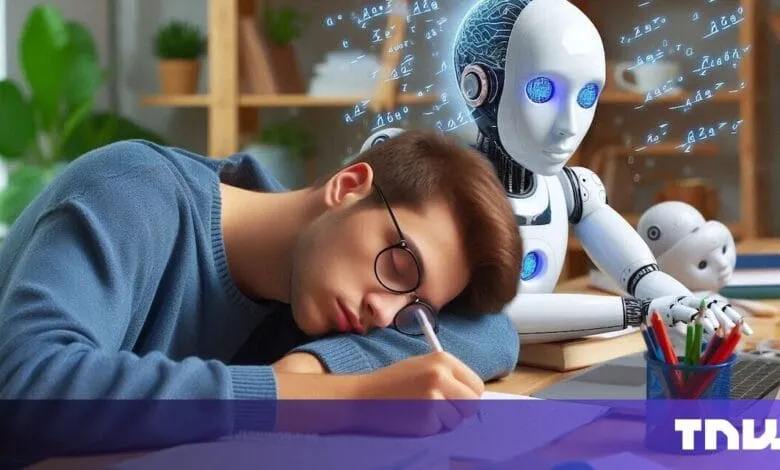

In the experiment, 54 students were divided into groups: one used ChatGPT, another used Google, and a third relied solely on their own thinking. Brain activity was monitored throughout. Participants who wrote without assistance showed the highest levels of mental connectivity, while those using AI demonstrated significantly lower engagement. When roles were later reversed, students who initially wrote manually improved with AI assistance, but those who started with AI struggled when forced to work independently.

Over four months, the manual group consistently outperformed others in neural, linguistic, and behavioral measures. Those leaning on ChatGPT spent less time on tasks and often resorted to copying generated text. Teachers noted their work lacked originality and depth. Rather than proving cognitive decay, however, the study highlighted how over-reliance on large language models (LLMs) encourages mental shortcuts. The researchers emphasized that the small-scale study warrants deeper investigation and cautioned against sensationalized interpretations.

Ironically, some media coverage of the study may have itself been generated or summarized using AI, contributing to misleading narratives. The research team explicitly asked journalists to avoid exaggerated claims and to acknowledge the study’s limitations.

Two clear takeaways emerge: more research is needed to guide LLM integration in education, and all users must maintain a critical stance toward AI-generated content. Researchers from Vrije Universiteit Amsterdam warn that uncritical acceptance of AI outputs could erode essential skills like comprehensive research and questioning underlying assumptions.

This points to a broader issue with AI systems: their outputs can reflect embedded biases and unexamined perspectives. Natasha Govender-Ropert, Head of AI for Financial Crimes at Rabobank, stresses that bias is subjective and context-dependent. There is no universal standard; each organization must define its own principles for fairness and accountability.

Social norms and values evolve, but AI models are trained on historical data that may not reflect current realities. Maintaining a critical, questioning approach, whether interacting with human- or machine-generated information, is essential for fostering a just and equitable society.

(Source: The Next Web)