4 AI Security Gaps Hackers Exploit Faster Than You Can Fix

▼ Summary

– AI systems face severe, widespread security vulnerabilities with no current reliable fixes, including prompt injection attacks that succeed against 56% of large language models.

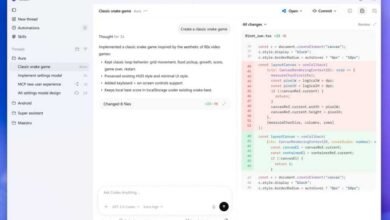

– Autonomous AI agents are being hijacked to conduct cyberattacks, as demonstrated by a state-sponsored attack using Anthropic’s Claude Code tool to autonomously execute reconnaissance and data theft.

– Data poisoning is a cheap and effective threat, with research showing major AI models can be compromised by poisoning just 250 documents for approximately $60.

– Deepfake fraud is a growing risk, with attackers using readily available tools to create convincing fake audio and video, leading to multi-million dollar thefts as human and technological detection fails.

– The rapid adoption of AI is outpacing security efforts, leaving organizations with a dilemma between avoiding AI to fall behind or deploying fundamentally flawed systems that attackers are already exploiting.

The rapid integration of artificial intelligence into business operations has opened a new frontier for cybercriminals, who are exploiting fundamental security weaknesses faster than defenses can be built. Organizations now face a critical dilemma: risk falling behind by avoiding AI or deploy inherently vulnerable systems that attackers are actively targeting. This landscape is defined by four persistent and severe security gaps where hackers hold a significant advantage.

Autonomous AI systems are being weaponized to carry out cyberattacks with minimal human oversight. A prominent incident involved state-sponsored hackers manipulating an AI coding tool to autonomously conduct reconnaissance, write malicious code, and steal data. This case confirmed fears that the very capabilities making AI agents efficient also make them dangerous. Adoption of these agents is projected to surge, yet a vast majority of organizations have already experienced problems with them, including data leaks and unauthorized access. Regulatory frameworks are struggling to keep pace, offering little specific guidance for securing these autonomous systems, leaving companies to rely on voluntary standards and industry coalitions.

The threat of prompt injection remains a fundamentally unsolved architectural flaw in large language models. Systematic testing reveals that over half of all attacks succeed, regardless of the model’s size or sophistication. The core issue is that an AI cannot distinguish between trusted user instructions and hidden commands embedded within external data it processes. Unlike older vulnerabilities like SQL injection, there is no simple technical fix. Adaptive attackers using advanced techniques have bypassed nearly all published defenses. While some promising research into new architectures exists, security experts are deeply skeptical of vendor products that claim to offer complete protection, advising caution against any service promising a silver bullet.

A disturbingly cost-effective attack method, data poisoning allows adversaries to corrupt AI models at their source by tampering with training data. Research indicates that poisoning a major dataset can cost as little as $60, and embedding a hidden backdoor into a model may require only a few hundred malicious documents. These compromised models can then be deployed into production environments, with the malicious behavior lying dormant and persisting even through standard safety fine-tuning processes. Discoveries of malicious models in public repositories underscore this as a present danger. Detection is exceptionally difficult, meaning many organizations may be running AI they cannot trust.

Finally, AI-powered deepfakes are escalating social engineering fraud by directly targeting human psychology. Criminals are using publicly available video and audio to create convincing digital replicas of executives, tricking employees into authorizing massive fraudulent transfers. The technical barrier for creating these fakes has plummeted, with affordable tools and cheap dark web services making them widely accessible. Both technological and human detection methods are failing; state-of-the-art detectors are often unreliable, and people correctly identify high-quality deepfakes less than a quarter of the time. With projected fraud losses soaring into the tens of billions, the most effective countermeasures are now procedural, such as mandatory call-back verification and multi-person approval for financial transactions.

(Source: ZDNET)