iOS 26.4: Siri’s Upgrade Is Bigger Than Apple Promised

Summary

– Apple’s iOS 26.4 update will introduce a new Siri version with a core architecture built around a large language model (LLM) for improved understanding and reasoning.

– This LLM upgrade will enable Siri to handle personal context, onscreen awareness, and deeper app integration for multi-step tasks, capabilities the current system lacks.

– The revamp follows Apple’s decision to abandon a failed hybrid system; the new Siri runs on a custom AI model developed in collaboration with Google’s Gemini team.

– The update, delayed from iOS 18, follows internal restructuring of Apple’s AI team and aims to deliver a more capable assistant, though it will not function as a full chatbot.

– Apple plans a further upgrade in iOS 27 to transform Siri into a full chatbot capable of back-and-forth conversation like competing AI models.

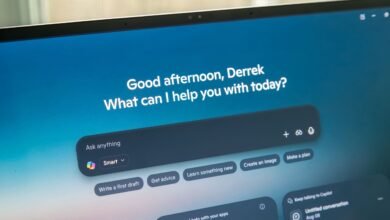

The upcoming iOS 26.4 update is set to deliver a transformative upgrade to Siri, fundamentally changing how users interact with Apple’s digital assistant. This spring release will introduce a version of Siri rebuilt from its core, leveraging advanced large language model technology to provide a smarter, more intuitive, and deeply integrated experience. While it won’t function as a full chatbot, the improvements promise to address long-standing limitations and finally bring Siri into the modern era of AI assistants.

The next-generation Siri will be built around a large language model (LLM) core, a foundational shift from its current architecture. Today, Siri chains together multiple separate machine learning models, each handling one stage of a request in sequence: it identifies the request’s intent, extracts the relevant data, and then calls the appropriate applications or APIs to fulfill it. This fragmented approach lacks the reasoning power and contextual understanding that modern LLMs provide. The new system is meant to let Siri interpret the nuances of a user’s query and reason about how to carry out the task, moving beyond simple speech-to-text transcription and keyword spotting.
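To make the staged design concrete, here is a minimal Python sketch of an intent-then-slots-then-dispatch pipeline. It is purely illustrative and is not Apple’s code: the keyword table, the regular expressions, and every function name (`identify_intent`, `extract_slots`, `handle`) are hypothetical, chosen only to show why this style of pipeline copes with stock commands and stumbles on unconventional phrasing.

```python
import re
from typing import Callable, Dict, Optional

# Stage 1: intent identification via a hypothetical keyword table.
INTENT_KEYWORDS: Dict[str, str] = {
    "timer": "set_timer",
    "text": "send_message",
}


def identify_intent(utterance: str) -> Optional[str]:
    """Return the first intent whose keyword appears as a word, else None."""
    words = utterance.lower().split()
    for keyword, intent in INTENT_KEYWORDS.items():
        if keyword in words:
            return intent
    return None


# Stage 2: slot extraction with one hand-written pattern per intent.
def extract_slots(intent: str, utterance: str) -> Dict[str, str]:
    """Pull the data the handler needs; return {} if the pattern fails."""
    if intent == "set_timer":
        match = re.search(r"(\d+)\s*(second|minute|hour)", utterance.lower())
        if match:
            return {"amount": match.group(1), "unit": match.group(2)}
    elif intent == "send_message":
        match = re.search(
            r"text\s+(\w+)\s+(?:that\s+|saying\s+)?(.+)", utterance, re.IGNORECASE
        )
        if match:
            return {"recipient": match.group(1), "body": match.group(2)}
    return {}


# Stage 3: dispatch to a stand-in for the app or API that fulfills the request.
def set_timer(slots: Dict[str, str]) -> str:
    return f"Timer set for {slots['amount']} {slots['unit']}(s)"


def send_message(slots: Dict[str, str]) -> str:
    return f"Message to {slots['recipient']}: {slots['body']}"


HANDLERS: Dict[str, Callable[[Dict[str, str]], str]] = {
    "set_timer": set_timer,
    "send_message": send_message,
}


def handle(utterance: str) -> str:
    """Run the three stages in order; any stage can fail the whole request."""
    intent = identify_intent(utterance)
    if intent is None:
        return "Sorry, I didn't understand that."
    slots = extract_slots(intent, utterance)
    if not slots:
        return "Sorry, I'm missing some details."
    return HANDLERS[intent](slots)


if __name__ == "__main__":
    print(handle("Set a timer for 10 minutes"))
    print(handle("Text Eric that I'm running late"))
    print(handle("Wake me when the pasta is done"))
```

The first two requests match the hard-coded patterns and succeed. The third expresses a perfectly reasonable goal but contains none of the expected keywords, so the pipeline gives up at the first stage. An LLM-centered design aims to replace this brittle matching with a model that interprets the request as a whole.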

This architectural overhaul is designed to solve Siri’s most persistent shortcomings. Currently, the assistant is reliable for basic commands like setting timers or sending pre-written texts. However, it struggles with multi-step requests, fails to interpret unconventional phrasing, lacks personal context, and cannot handle follow-up questions. By integrating an LLM as its central “brain,” Siri will gain the ability to understand complex instructions, infer user intent, and manage actions that span across different applications and pieces of information.

Apple initially outlined these ambitions under the Apple Intelligence banner during the iOS 18 unveiling. The promised enhancements focus on three key areas: personal context, onscreen awareness, and deeper app integration. With personal context, Siri will learn from your emails, messages, files, and photos to answer questions like “Show me the files Eric sent me last week” or “What’s my passport number?” Onscreen awareness will let Siri see and act upon content displayed on your device, such as adding a texted address directly to a contact card. Deeper app integration will enable cross-application workflows, for example, editing a photo and then instructing Siri to send it to a specific person.

Interestingly, the scope of the iOS 26.4 update may exceed Apple’s original promises. During an internal meeting in August 2025, software chief Craig Federighi explained that earlier attempts to merge Siri’s legacy system with a new LLM-based system had failed. The hybrid approach was untenable, forcing a complete architectural rebuild. Federighi stated this comprehensive revamp put Apple in a position “to deliver a much bigger upgrade” than what was initially announced at WWDC 2024.

A significant factor in this development shift was Apple’s decision to partner with Google. After acknowledging its in-house AI models couldn’t match competitors, Apple entered a multi-year partnership to utilize a custom model built with Google’s Gemini team. For the foreseeable future, Siri and other Apple Intelligence features will be powered by this collaboration, though Apple continues developing its own models. The company plans to maintain its privacy stance by processing some features on-device and using Private Cloud Compute, keeping personal data secure and anonymized.

It’s important to note what this update will not include. Siri in iOS 26.4 will not be a chatbot; it will not possess long-term memory or support extended, conversational dialogues. The primary interface will remain voice-based with limited text input. The full chatbot experience is reportedly slated for a subsequent iOS 27 update.

The path to this release has been rocky. Apple’s high-profile delay of the smarter Siri, originally promised for an iOS 18 update, became a public relations challenge. The company later admitted to behind-the-scenes quality issues, leading to a postponement until spring 2026. This misstep triggered internal restructuring: AI chief John Giannandrea was moved off the Siri team and replaced by Mike Rockwell, who reports directly to Federighi. Federighi has since told employees the new leadership has “supercharged” Siri’s development.

Apple is targeting a spring 2026 launch for iOS 26.4, with testing likely to begin in late February or March. The new Siri is expected to be compatible with all devices supporting Apple Intelligence, though some features may extend to older hardware. This update represents a crucial step in Apple’s strategy to regain ground in the competitive AI landscape, setting the stage for an even more advanced, conversational Siri in the future.

(Source: MacRumors)