Master the AI Balancing Act: A 2026 Business Imperative

Summary

– A work of fiction highlights real-world risks of unregulated AI, such as harmful advice, and underscores the need for ethical safeguards.

– Experts stress the importance of balancing governance with innovation, avoiding overregulation that could slow development.

– Andrew Ng advocates for a sandbox approach, where AI is tested in safe, controlled environments before broader release to maintain speed and responsibility.

– Effective AI governance requires simple, clear rules for usage, data handling, and accountability to build trust and transparency.

– Responsible AI is defined by key tenets including anti-bias, transparency, safety, accountability, and human-centric design.

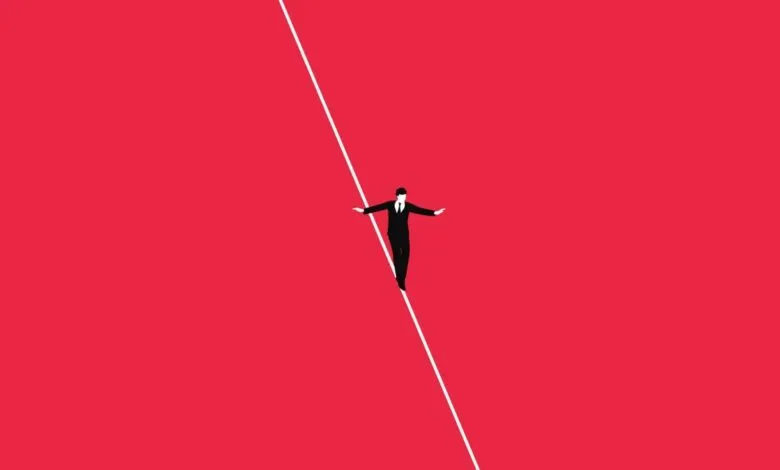

Navigating the responsible development and deployment of artificial intelligence has become a critical priority for business leaders. As AI systems become more integrated into core operations, finding the right equilibrium between rapid innovation and necessary safeguards is the defining challenge for the coming year. One safeguard, advocated by Andrew Ng, is building AI in a sandbox: a controlled environment where applications can be tested thoroughly before wider release. This approach lets teams move quickly while proactively managing risks, so that neither governance nor speed is sacrificed.

The conversation around AI safety is often framed by dramatic scenarios, like those found in contemporary fiction exploring the dangers of unregulated systems. While these are extreme examples, they underscore a genuine concern: AI can produce biased outcomes, deliver harmful advice, or operate in unpredictable ways with serious consequences. At the same time, some experts caution that overly restrictive regulations could stifle innovation. The goal is not to halt progress but to guide it responsibly.

Industry leaders suggest that a balanced, pragmatic framework is essential. Many of the most responsible teams move very quickly, but they do so within clearly defined safety parameters. The sandbox method involves establishing strict internal rules, such as prohibiting external deployment under the company brand or restricting the use of sensitive data, while allowing product and engineering teams to experiment freely internally. Once an application is validated as safe and effective within this protected space, resources can be allocated to scale it with a focus on security and reliability.
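As a rough illustration, the sandbox rules described above could be encoded as a simple promotion gate. This is only a sketch under stated assumptions: the `Experiment` fields and the `sandbox_violations` and `ready_to_scale` helpers are hypothetical names invented here, not part of any framework mentioned in the article.

```python
from dataclasses import dataclass


@dataclass
class Experiment:
    """Hypothetical record describing an internal AI experiment."""
    name: str
    external_facing: bool      # would be deployed under the company brand
    uses_sensitive_data: bool  # touches data the sandbox rules restrict
    validated: bool = False    # passed internal safety/effectiveness review


def sandbox_violations(exp: Experiment) -> list[str]:
    """Return the sandbox rules this experiment currently breaks."""
    problems = []
    if exp.external_facing:
        problems.append("external deployment under the company brand")
    if exp.uses_sensitive_data:
        problems.append("use of sensitive data")
    return problems


def ready_to_scale(exp: Experiment) -> bool:
    """An experiment graduates only if it is validated and rule-compliant."""
    return exp.validated and not sandbox_violations(exp)
```

Under these assumptions, a team can experiment freely as long as `sandbox_violations` returns an empty list, and resources are committed to scaling only once `ready_to_scale` is true.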

On the governance side, simplicity and clarity are powerful tools. With AI now used across technical and non-technical teams, organizations benefit from establishing straightforward policies. These should clearly outline where AI is and is not permitted, what company data it can access, and who must review high-impact decisions. This transparency helps build trust, which is foundational for successful AI adoption.
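One way to keep such a policy simple is to write it down as data and check requests against it. The sketch below is illustrative only: the `POLICY` contents, the category names, and the `check_request` function are assumptions made for this example, not rules taken from the article or from any specific organization.

```python
# Hypothetical policy: every value here is a placeholder for illustration.
POLICY = {
    "allowed_uses": {"drafting", "summarization", "code_review"},
    "allowed_data": {"public", "internal"},
    "human_review_required": {"hiring", "lending"},
}


def check_request(use: str, data_class: str, policy: dict = POLICY) -> str:
    """Classify an AI usage request as allowed, denied, or needing review."""
    # Data rules come first: restricted data is off-limits for any use.
    if data_class not in policy["allowed_data"]:
        return "denied"
    # High-impact decisions are routed to a human reviewer.
    if use in policy["human_review_required"]:
        return "needs_human_review"
    # Everything else must be explicitly permitted.
    if use in policy["allowed_uses"]:
        return "allowed"
    return "denied"
```

Keeping the policy in a single small structure like this means non-technical teams can read it directly, and any change to the rules is visible in one place.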

Trust is further cultivated by being open about how AI systems function, where data originates, and how automated decisions are made. Leaders should implement balanced human oversight, ethical design principles, and rigorous testing for bias. Assigning clear ownership for each AI initiative is also crucial; someone must be accountable for its outcomes. Publishing a plain-language AI charter for employees and customers demystifies the technology and demonstrates a commitment to responsible use.

A responsible AI strategy for leaders rests on several fundamental principles: anti-bias, transparency, safety, accountability, and human-centric design. Together these form a framework for ethical development, including a strong anti-bias effort to eliminate unfair outcomes. Successfully managing AI is not a choice between innovation and oversight, but a deliberate combination of the two. Companies that test in a sandbox, apply straightforward governance, and follow core ethical principles can use AI’s potential wisely. This approach reduces risk and fosters the trust needed for lasting success in a world shaped by algorithms.

(Source: ZDNET)