Google unveils Ironwood TPU, details next-gen AI chips at TSMC 2nm

Summary

– Google has made its seventh-generation TPU, Ironwood, generally available, positioning it as a high-performance inference chip with 4.6 petaFLOPS per chip and 42.5 exaFLOPS in a superpod configuration.

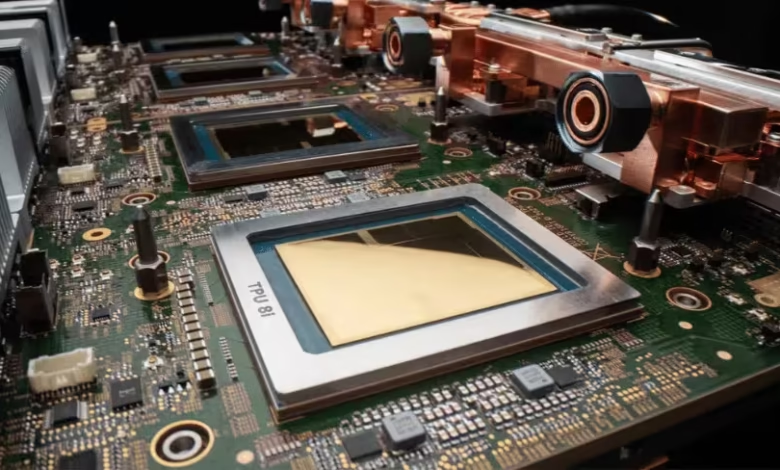

– The company previewed its eighth-generation TPU architecture, splitting it for the first time into a Broadcom-designed training chip (TPU 8t) and a MediaTek-designed inference chip (TPU 8i), both targeting TSMC’s 2nm process for late 2027.

– Google’s strategic emphasis on inference hardware addresses the ongoing operational cost of running AI models, as the company must double its AI serving capacity every six months to meet user demand.

– Anthropic is a key customer, with a deal expanded to 3.5 gigawatts of compute in 2027, making it the anchor customer for both the Ironwood and upcoming eighth-generation TPUs.

– Google is building a diversified custom chip supply chain with multiple partners, projecting massive TPU shipments and committing to $175-185 billion in infrastructure spending for 2026 to support this strategy.

At Google Cloud Next 2026, the company announced the general availability of its seventh-generation Tensor Processing Unit, Ironwood, while also revealing its ambitious roadmap for the next generation. This dual announcement underscores a massive strategic investment in custom AI silicon, specifically engineered to dominate the critical and costly domain of AI inference. The new eighth-generation architecture will, for the first time, split into two distinct chips: the TPU 8t (Sunfish) for training, designed with Broadcom, and the TPU 8i (Zebrafish) for inference, designed with MediaTek. Both next-gen chips are slated for production on TSMC’s 2nm process and are targeting availability in late 2027.

Positioned as the cornerstone of Google’s infrastructure push, Ironwood is described as the first TPU built for the age of inference. Each chip delivers a peak of 4.6 petaFLOPS of FP8 compute, a fourfold leap over its Trillium predecessor, supported by 192GB of HBM3e memory. When scaled into a superpod configuration of 9,216 chips, the system achieves a staggering 42.5 exaFLOPS. This raw performance positions Ironwood as a direct competitor to Nvidia’s Blackwell B200 on paper, though each architecture has distinct advantages. Nvidia maintains an edge in single-device interconnect speed and supports FP4 precision for quantized models. Google’s strength lies in cluster-scale efficiency, boasting roughly twice the performance per watt of Trillium and a superpod architecture optimized for massive inference workloads.
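The superpod figure can be sanity-checked from the per-chip numbers quoted above. The following is a rough back-of-the-envelope sketch, not an official calculation: the function name and constants are illustrative, the inputs are the rounded figures from this article, and the aggregate memory total is derived here rather than reported by Google.

```python
# Back-of-the-envelope check of the superpod figures quoted in the article.
# All inputs are the rounded numbers from the text; names are illustrative.

CHIPS_PER_SUPERPOD = 9_216
PEAK_PFLOPS_PER_CHIP = 4.6   # peak FP8 petaFLOPS per chip (rounded)
HBM_GB_PER_CHIP = 192        # HBM3e capacity per chip, in GB


def superpod_totals(chips=CHIPS_PER_SUPERPOD,
                    pflops_per_chip=PEAK_PFLOPS_PER_CHIP,
                    hbm_gb_per_chip=HBM_GB_PER_CHIP):
    """Return (peak exaFLOPS, total HBM in decimal petabytes) for a pod."""
    exaflops = chips * pflops_per_chip / 1_000      # 1 exaFLOPS = 1,000 petaFLOPS
    hbm_pb = chips * hbm_gb_per_chip / 1_000_000    # 1 PB = 1,000,000 GB
    return exaflops, hbm_pb


if __name__ == "__main__":
    exaflops, hbm_pb = superpod_totals()
    print(f"Peak compute: {exaflops:.1f} exaFLOPS")
    print(f"Total HBM:    {hbm_pb:.2f} PB")
```

With the rounded 4.6 petaFLOPS input the product comes to about 42.4 exaFLOPS, slightly under the quoted 42.5. A gap of that size is what one would expect if the per-chip figure has been rounded down. These are peak numbers, so sustained throughput on real workloads will be lower.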

This intense focus on inference hardware marks a pivotal strategic shift. While training a large model is a monumental but finite expense, inference costs are a perpetual operational burden that scales directly with user demand. Google reports it must double its AI serving capacity every six months to keep pace with services like Gemini, Search, and YouTube. Consequently, the company that masters cost-efficient inference stands to capture the immense margins currently flowing to general-purpose GPU suppliers. Ironwood is Google’s engineered solution, built for workloads like LLM inference and mixture-of-experts models. Its large memory capacity allows it to hold bigger model shards, reducing cross-chip communication, and its compute array is optimized for the dense linear algebra central to transformer models.
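To see what "doubling every six months" implies if it were sustained, here is a minimal sketch of the compounding arithmetic. It assumes smooth exponential growth at a constant doubling period, which is a simplification made for illustration; the function name is hypothetical and the article gives no forecast beyond the doubling rate itself.

```python
# Compounding implied by a fixed doubling period.
# Assumes smooth exponential growth, a simplification for illustration only.

def capacity_multiple(months, doubling_period_months=6):
    """Capacity relative to today after `months`, given a constant doubling period."""
    return 2 ** (months / doubling_period_months)


if __name__ == "__main__":
    for months in (6, 12, 24, 36):
        print(f"After {months:2d} months: {capacity_multiple(months):.0f}x today's capacity")
```

Under that assumption, serving capacity would need to grow 4x in one year and 16x in two, which helps explain why per-query inference cost, rather than one-off training cost, dominates the economics described here.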

The preview of the eighth-generation TPUs represents the most significant architectural departure in Google’s chip history. By creating separate chips for training and inference, Google is abandoning the compromise of a one-size-fits-all design. TPU 8t (Sunfish) is a high-performance training accelerator featuring dual compute dies and enhanced memory bandwidth. In contrast, TPU 8i (Zebrafish) is a streamlined inference chip engineered to cost 20-30% less than its training counterpart. The split also formalizes Google’s multi-supplier strategy, pairing Broadcom’s high-performance design expertise with MediaTek’s cost-optimization capabilities, and the resulting diversified supply chain gives Google strategic leverage and redundancy.

A key driver of this build-out is anchor customer Anthropic. Its expanded partnership now encompasses 3.5 gigawatts of compute slated for 2027, making it the launch customer for both Ironwood and the eighth-generation chips. Anthropic’s commitment, alongside its exploration of its own custom silicon, highlights the critical importance of inference economics. The financial scale is equally large: Google has committed to $175-185 billion in infrastructure spending for 2026, with a significant portion dedicated to servers. Industry-wide, big tech AI infrastructure investment is approaching $700 billion this year.

This push is part of a broader industry trend where every major cloud provider is accelerating its custom silicon program. The custom AI chip market is growing nearly three times faster than the GPU segment, with projections suggesting it could capture 45% of the total market by 2028. Nvidia’s response has been to deepen its ecosystem lock-in through technologies like NVLink Fusion, betting on the enduring strength of its CUDA software platform. However, hyperscalers are building their own chips not necessarily to beat Nvidia on every technical metric, but because purpose-built silicon for their specific workloads and scale offers superior long-term economics.

While Google Cloud holds a smaller market share than AWS or Azure, it exited 2025 with the fastest growth rate of the three and newly achieved profitability. The launch of Ironwood won’t instantly alter market positions, and Nvidia’s forthcoming Rubin architecture may reclaim certain technical advantages. Yet Google’s path is now clearly set: a roadmap from Ironwood to separate training and inference chips on 2nm, backed by historic capital expenditure, a multi-partner supply chain, and a marquee AI client scaling to multi-gigawatt demand. The race is fundamentally about capturing margin, and Google is betting that the winner will be the company that builds the most efficient silicon for its own needs.

(Source: The Next Web)