How to Send PCIe Over Fiber With SFP Modules

Summary

– Commercial transceivers exist that let PCIe devices like GPUs operate outside a PC.

– Current solutions use an encapsulating protocol, such as Thunderbolt.

– They do not use a direct, straight PCIe connection.

– The alternative is to send native PCIe signals over fiber using standard SFP modules.

– The focus is on how external PCIe hardware is physically connected to the host.

Connecting high-performance components like GPUs and storage arrays outside a traditional computer chassis opens up new options for system design. While commercial solutions exist, they often rely on encapsulating protocols such as Thunderbolt, which can introduce latency and complexity. A more direct approach sends native PCI Express (PCIe) signals over fiber optic cables using standard SFP modules. This method promises lower latency and greater flexibility for specialized applications.

At its core, PCIe is a high-speed serial expansion bus. Transmitting its electrical signals over long distances requires conversion to light. This is where SFP (Small Form-factor Pluggable) optical transceivers come in. These compact, hot-swappable modules are commonly used in networking for Ethernet and Fibre Channel, but they can be adapted to carry PCIe lanes. Each module has one transmit and one receive channel, so it carries a single lane. A PCIe retimer or redriver chip conditions the electrical signal before the SFP module converts it to light, and a matching module at the far end converts the light back into an electrical PCIe signal that the endpoint device can understand.

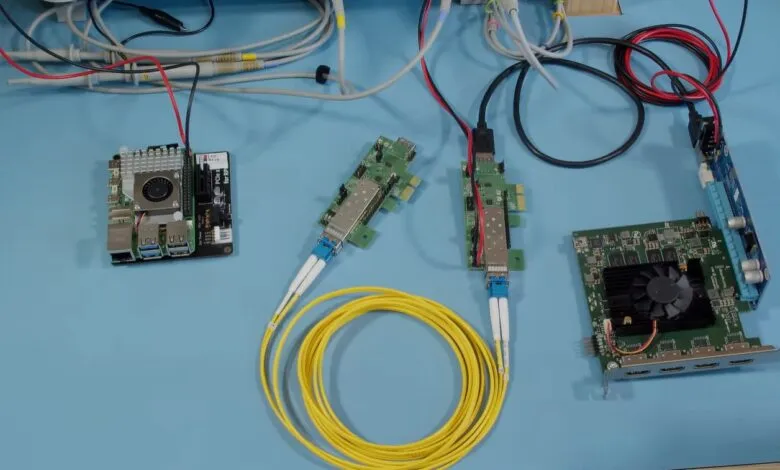

Implementing this requires careful hardware selection. Not all SFP modules are suitable: a module must support the line rate and encoding of the PCIe generation in use, such as PCIe 4.0 or 5.0. Engineers typically use FPGA (Field-Programmable Gate Array) development boards or specialized PCIe extension cards that provide the necessary retimers and SFP cages. These boards handle the critical signal integrity work, keeping the data stream intact across the fiber link. The physical medium is usually multimode fiber (MMF) with LC connectors, chosen for its cost-effectiveness over shorter reaches.
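As a rough illustration of the data-rate matching described above, the sketch below compares the per-lane line rate of each PCIe generation with nominal maximum rates for common SFP module classes. The PCIe rates and encodings are the published per-lane figures; the module limits in `MODULE_MAX_GBPS` are assumed, illustrative values and should be replaced with numbers from the actual module datasheet. Rate headroom is only a necessary condition: a module with a built-in clock recovery circuit may lock only to specific rates.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PcieGen:
    name: str
    gt_per_s: float    # raw line rate per lane, in GT/s
    payload_bits: int  # payload bits per encoded block
    block_bits: int    # total bits per encoded block

    @property
    def effective_gbps(self) -> float:
        """Per-lane data rate after line-encoding overhead."""
        return self.gt_per_s * self.payload_bits / self.block_bits


PCIE_GENS = [
    PcieGen("PCIe 1.0", 2.5, 8, 10),      # 8b/10b
    PcieGen("PCIe 2.0", 5.0, 8, 10),      # 8b/10b
    PcieGen("PCIe 3.0", 8.0, 128, 130),   # 128b/130b
    PcieGen("PCIe 4.0", 16.0, 128, 130),  # 128b/130b
    PcieGen("PCIe 5.0", 32.0, 128, 130),  # 128b/130b
]

# ASSUMPTION: illustrative nominal maximum line rates in Gb/s.
# Real limits vary per module; check the datasheet.
MODULE_MAX_GBPS = {"SFP": 4.25, "SFP+": 10.3125, "SFP28": 25.78125}


def modules_fast_enough(gen: PcieGen) -> list[str]:
    """Module classes whose assumed limit covers the raw line rate."""
    return [name for name, limit in MODULE_MAX_GBPS.items()
            if limit >= gen.gt_per_s]


if __name__ == "__main__":
    for gen in PCIE_GENS:
        candidates = modules_fast_enough(gen) or ["none in this table"]
        print(f"{gen.name}: {gen.gt_per_s} GT/s raw, "
              f"{gen.effective_gbps:.2f} Gb/s effective, "
              f"candidates: {', '.join(candidates)}")
```

Under these assumed limits, a single PCIe 5.0 lane at 32 GT/s exceeds every module class in the table, which is a reminder to verify the supported rate before choosing a PCIe generation for the link.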

The primary advantage of this direct optical link is low added latency. By avoiding protocol encapsulation and operating at the physical layer, the link behaves almost like a direct copper connection, just much longer. This is crucial for latency-sensitive workloads in high-performance computing (HPC) and financial trading systems. It can also support resource disaggregation, in which a powerful accelerator like a GPU sits outside the host chassis and is attached to host servers as needed.
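Even without encapsulation, the fiber itself adds propagation delay that grows with length. The sketch below estimates that delay, assuming a group index of about 1.47 for silica fiber (an assumed typical value, not a figure from the article). It ignores any delay added by retimers and by the modules themselves.

```python
C_VACUUM_M_PER_S = 299_792_458  # speed of light in vacuum

# ASSUMPTION: typical group index for silica fiber; varies by fiber type.
ASSUMED_GROUP_INDEX = 1.47


def one_way_delay_ns(length_m: float,
                     group_index: float = ASSUMED_GROUP_INDEX) -> float:
    """Propagation delay in nanoseconds for one pass through the fiber."""
    return length_m * group_index / C_VACUUM_M_PER_S * 1e9


def round_trip_delay_ns(length_m: float,
                        group_index: float = ASSUMED_GROUP_INDEX) -> float:
    """Delay for a request and its response to traverse the fiber."""
    return 2 * one_way_delay_ns(length_m, group_index)


if __name__ == "__main__":
    for length in (1, 10, 100):
        print(f"{length:>4} m: one-way {one_way_delay_ns(length):7.1f} ns, "
              f"round trip {round_trip_delay_ns(length):7.1f} ns")
```

This works out to roughly 5 ns per meter each way, so a short link adds little, while a run of around 100 m adds on the order of a microsecond per round trip.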

However, significant challenges remain. An SFP link carries only the high-speed serial data, so anything PCIe normally does through electrical behavior or sideband wires is not handled automatically. Link training, for example, relies on receiver detection and electrical idle states that an optical module does not pass through, and the reference clock, reset, and power-management signals have no path across the fiber. These must be managed at the host and endpoint, potentially requiring custom driver software or BIOS-level adjustments. Furthermore, the maximum reliable distance is constrained by the chosen SFP technology and fiber type, though it readily surpasses the severe length limitations of copper PCIe cables.
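Because the link does not bring itself up automatically, it helps to check from the host whether the endpoint trained at the expected speed and width. The sketch below assumes a Linux host and reads the standard PCI sysfs attributes (`current_link_speed`, `max_link_speed`, `current_link_width`, `max_link_width`). The device address in the usage example is hypothetical, and the helper names are illustrative.

```python
from pathlib import Path

LINK_ATTRS = (
    "current_link_speed",
    "max_link_speed",
    "current_link_width",
    "max_link_width",
)


def read_link_status(bdf: str,
                     sysfs_root: str = "/sys/bus/pci/devices") -> dict:
    """Read link attributes for one PCI device; missing ones map to None."""
    dev = Path(sysfs_root) / bdf
    status = {}
    for attr in LINK_ATTRS:
        path = dev / attr
        status[attr] = path.read_text().strip() if path.is_file() else None
    return status


def link_is_degraded(status: dict):
    """True if the link trained below its maximum speed or width.

    Returns None when any attribute is unavailable.
    """
    if any(value is None for value in status.values()):
        return None
    return (status["current_link_speed"] != status["max_link_speed"]
            or status["current_link_width"] != status["max_link_width"])


if __name__ == "__main__":
    # Hypothetical address; list real ones with `lspci -D`.
    status = read_link_status("0000:01:00.0")
    print(status, "degraded:", link_is_degraded(status))
```

A link that trains at a lower speed or narrower width than advertised can be a first hint of a signal integrity or module compatibility problem on the optical path.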

For now, implementing PCIe over fiber via SFP is largely a domain for researchers and engineers with deep hardware expertise. It offers a glimpse into a future where system components are not bound by the chassis, enabling more modular and efficient data center architectures. As the underlying technology matures and becomes more standardized, it could move from niche applications into broader commercial adoption.

(Source: Hackaday)