Google’s Gemma 4: Open-Source AI Game Changer

Summary

– Google has launched Gemma 4, a family of open-source AI models designed for diverse applications from high-performance computing to on-device use.

– It is released under the Apache 2.0 license, allowing modification and commercial use to foster collaboration and customization.

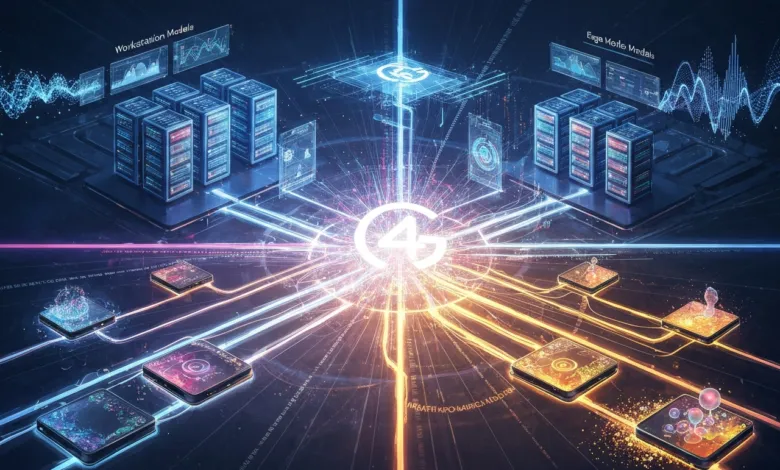

– The models come in two tiers: Workstation Models for demanding tasks with a 256K context window, and Edge Models for lightweight, on-device deployment with a 128K context window.

– Gemma 4 features multi-modal capabilities, natively processing and integrating text, vision, and audio inputs for unified workflows.

– It demonstrates advanced reasoning and strong benchmark performance, suitable for complex tasks across industries like healthcare and finance.

The launch of Google’s Gemma 4 marks a transformative moment for artificial intelligence, merging sophisticated performance with open-source accessibility. This new family of models, as noted by industry observers, is engineered to serve a wide spectrum of developer needs, from intensive computational workloads to efficient, on-device applications. Its defining characteristics include multi-modal integration for processing text, vision, and audio in a unified manner, alongside long chain-of-thought reasoning that supports complex problem-solving. With its two primary tiers, Workstation and Edge models, the platform gives professionals across sectors the flexibility to manage intricate server-based tasks or to optimize for devices with limited resources.

Understanding the practical impact of Gemma 4 requires a look at its core specifications. The models feature context windows of 256K tokens for the Workstation tier and 128K tokens for the Edge tier, directly enhancing their utility for both enterprise and edge scenarios. Their release under the Apache 2.0 license is a foundational choice, actively encouraging widespread customization, commercial use, and collaborative innovation. Furthermore, capabilities like multi-image input support and advanced speech recognition unlock novel avenues for creating cohesive, intelligent workflows. This combination of power and permission provides a robust toolkit for projects of any scale.

A central pillar of Gemma 4’s philosophy is its open-source licensing. The Apache 2.0 license is deliberately permissive, granting developers the legal freedom to modify, fine-tune, and deploy these models for virtually any purpose, including commercial ones. This stands in contrast to models released under more restrictive licenses, and it effectively lowers barriers to entry. It empowers individual experimenters and large organizations alike to tailor the technology to their unique challenges, fostering a community-driven acceleration of AI progress. The license ensures that control remains with the implementer, making cutting-edge AI more democratically accessible.

To address diverse operational environments, Gemma 4 is structured around two distinct model tiers. This ensures the technology is equally viable for data-center-grade computations and compact, portable devices.

The Workstation Models, which include a 31B dense model and a 26B mixture-of-experts (MoE) variant, are built for heavy-duty tasks. Their substantial 256K context window makes them exceptionally well-suited for applications like advanced coding assistants, multi-user server platforms, and other long-context workflows that demand high precision.

Conversely, the Edge Models, such as the E2B and E4B, are optimized for efficiency. Featuring a 128K context window and designed for low latency, they enable sophisticated AI functionality directly on resource-constrained hardware like smartphones, IoT sensors, and single-board computers such as Raspberry Pis. This dual approach guarantees that Gemma 4 can power everything from global enterprise systems to personal consumer gadgets.
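The two-tier split described above can be captured as a small lookup. The following is only a minimal sketch: the tier names, model names, and context-window sizes come from the article, while `Tier`, `pick_tier`, the selection policy, and the assumption that "K" means 1,024 tokens are all hypothetical and not part of any Gemma API.

```python
from dataclasses import dataclass

# Assumption: "K" is read as 1,024 tokens; the article does not define it.
TOKENS_PER_K = 1024


@dataclass(frozen=True)
class Tier:
    name: str
    models: tuple[str, ...]
    context_tokens: int
    on_device: bool


# Figures taken from the article; everything else here is illustrative.
WORKSTATION = Tier("Workstation", ("31B dense", "26B MoE"), 256 * TOKENS_PER_K, False)
EDGE = Tier("Edge", ("E2B", "E4B"), 128 * TOKENS_PER_K, True)


def pick_tier(prompt_tokens: int, needs_on_device: bool) -> Tier:
    """Hypothetical policy: Edge for on-device work, Workstation otherwise."""
    tier = EDGE if needs_on_device else WORKSTATION
    if prompt_tokens > tier.context_tokens:
        raise ValueError(
            f"{prompt_tokens} tokens exceeds the {tier.name} context window "
            f"of {tier.context_tokens} tokens"
        )
    return tier


if __name__ == "__main__":
    print(pick_tier(200_000, needs_on_device=False).name)
    print(pick_tier(8_000, needs_on_device=True).models)
```

A 200,000-token prompt fits the Workstation window but not the Edge one, so a request like that on a phone would need to be shortened or routed to a server.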

A major technical leap is found in Gemma 4’s multi-modal capabilities. By natively and seamlessly integrating text, vision, and audio processing, the models facilitate unified workflows that were previously more difficult to engineer. The enhanced vision encoder adeptly handles various aspect ratios and can process multiple images simultaneously, improving complex analysis tasks. The refined audio encoder delivers high accuracy in transcription, translation, and speech recognition, even in noisy or constrained edge environments. This convergence allows for the creation of intuitive applications that can, for instance, analyze a visual scene while interpreting an audio description, opening new frontiers for developer creativity.

The platform also shines in advanced reasoning. Through its long chain-of-thought processes, Gemma 4 can navigate nuanced, multi-step problems, producing coherent and contextually sound outputs. This is crucial for applications involving multi-turn dialogues, intricate decision-making, and logical problem-solving. The improved integration between the vision and audio encoders further strengthens this cross-modal reasoning, making the models highly effective for building intelligent virtual assistants, automated support systems, and advanced research tools that require a deep, contextual understanding of complex scenarios.

In terms of raw performance, Gemma 4 has demonstrated leading results on respected industry benchmarks like MMMU-Pro and SWE-bench Pro. Its evaluations also cover complex capabilities such as multi-turn agentic flows and function calling, areas where the models have shown remarkable precision and reliability. This consistent, top-tier performance across a battery of tests provides confidence for deploying Gemma 4 in both research initiatives and demanding production environments, from healthcare diagnostics to financial analytics.

Deployment is streamlined through availability on major platforms like Hugging Face and Google Cloud. For scalable, serverless implementations, it supports Cloud Run with G4 GPUs, offering efficient resource management. These options provide significant flexibility, allowing teams to integrate Gemma 4 into existing infrastructure with minimal friction, whether they prefer cloud-based services or on-premises solutions.
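When choosing hardware for any of these deployment routes, a rough estimate of weight memory is a useful first check. The sketch below is back-of-the-envelope arithmetic only: it assumes the "31B" and "26B" labels denote total parameter counts, uses standard bytes-per-parameter figures for common precisions, and ignores the KV cache, activations, and runtime overhead. The function name `weight_memory_gb` is hypothetical.

```python
# Bytes needed to store one parameter at common numeric precisions.
BYTES_PER_PARAM = {"fp32": 4.0, "bf16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_memory_gb(params_billions: float, precision: str) -> float:
    """Approximate memory for model weights only, in GB (10^9 bytes).

    params_billions * 1e9 params * bytes/param / 1e9 bytes-per-GB
    simplifies to params_billions * bytes/param.
    """
    return params_billions * BYTES_PER_PARAM[precision]


if __name__ == "__main__":
    # Assumption: model names give total parameter counts in billions.
    for name, size in (("31B dense", 31), ("26B MoE", 26)):
        for precision in ("bf16", "int4"):
            gb = weight_memory_gb(size, precision)
            print(f"{name} @ {precision}: ~{gb:.1f} GB of weights")
```

Under these assumptions the 31B dense model needs roughly 62 GB for weights at bf16 and about 15.5 GB at 4-bit precision. A mixture-of-experts model typically still keeps all of its expert weights resident, so its total parameter count, not only the parameters active per token, drives this estimate.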

The applications for this technology span many industries. The models can be fine-tuned for specialized domains, enabling everything from bespoke data analytics in finance to multilingual customer service chatbots. Pre-trained on 140 languages, with 35 of them covered by fine-tuning, the models are well suited to global, multilingual contexts. For edge computing, Gemma 4 brings advanced AI to everyday life, enabling vision systems for robotics, voice-activated smart home interfaces, and real-time transcription services that enhance accessibility.

Ultimately, Gemma 4 represents a pivotal advancement, blending open collaboration with technical excellence. It provides developers, researchers, and businesses with a powerful and adaptable foundation to drive innovation. By offering both uncompromising performance for workstations and efficient intelligence for the edge, it equips the community to shape the next wave of practical, impactful artificial intelligence.

(Source: Geeky Gadgets)