5 Hidden Competitive Gates in ‘Rank and Display’

Summary

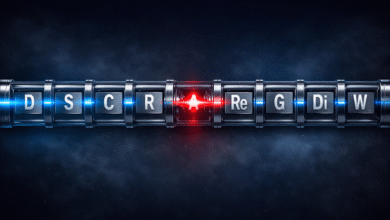

– The AI engine pipeline consists of two phases: the DSCRI infrastructure phase (discovery to indexing) with absolute tests, and the ARGDW competitive phase (annotation to won) with relative tests where content must outperform alternatives.

– Annotation is the critical gate where the system classifies content’s meaning; misclassification here invisibly degrades performance in all subsequent competitive gates.

– Success requires presence across three knowledge graphs: the entity graph (structured facts), document graph (content chunks), and concept graph (inferred relationships), as multi-graph presence creates a compounding confidence advantage.

– The competitive phase progressively narrows through recruitment, grounding, and display, culminating in the “won” gate, which is a zero-sum moment where one brand secures the conversion.

– Optimizing for AI visibility requires moving beyond traditional SEO to address all five competitive gates (ARGDW), with a particular focus on improving annotation accuracy and entity clarity to avoid misclassification.

For content strategists, the journey from creation to conversion is governed by a critical, often misunderstood pipeline. This process moves through five distinct competitive gates that determine whether AI systems recruit, trust, and ultimately recommend your content to potential customers. While often simplified as “rank and display,” these stages represent a Darwinian funnel where your material must not only pass technical checks but also outperform alternatives at every turn.

The initial infrastructure phase, DSCRI, involves absolute tests like discovery and indexing. Here, the system either has your content or it doesn’t. However, the real battle begins with the competitive phase: ARGDW. This stands for annotation, recruitment, grounding, display, and won. In this phase, every test is relative; your content must be better than the competition’s. The pivotal shift between these phases is the “competitive turn,” where the algorithm’s question changes from “Do I have this?” to “Is this better than the alternatives?”
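The difference between an absolute test and a relative test can be sketched in a few lines of Python. This is a minimal illustration only, not how any real engine works: the `Candidate` class, the `indexed` flag, and the single numeric `score` are hypothetical stand-ins for whatever signals a system actually uses.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Candidate:
    brand: str
    indexed: bool  # hypothetical outcome of the infrastructure phase
    score: float   # hypothetical quality signal used in the competitive phase


def passes_infrastructure(c: Candidate) -> bool:
    """Absolute test: the system either has the content or it does not."""
    return c.indexed


def competitive_winner(candidates: List[Candidate]) -> Optional[Candidate]:
    """Relative test: only indexed content competes, and the best score wins."""
    eligible = [c for c in candidates if passes_infrastructure(c)]
    return max(eligible, key=lambda c: c.score, default=None)


# Illustrative values only.
candidates = [
    Candidate("Brand A", indexed=True, score=0.72),
    Candidate("Brand B", indexed=True, score=0.81),
    Candidate("Brand C", indexed=False, score=0.95),
]

winner = competitive_winner(candidates)
print(winner.brand if winner else "no eligible content")
```

In this toy data, Brand C has the highest score but never reaches the comparison because it failed the absolute test, while Brand A passes every absolute test and still loses the relative one.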

A fundamental advantage lies in achieving multi-graph presence. Modern systems operate across three knowledge structures: the entity graph (structured facts), the document graph (web content), and the concept graph (inferred relationships). Brands that successfully appear across all three graphs build a compounding confidence advantage. For instance, a competitor with only document graph presence (a search ranking) will often lose to a brand that also has entity graph presence (a knowledge panel), as the latter provides low-fuzz (that is, low-ambiguity), verifiable data that algorithms trust more.

Annotation is arguably the most crucial gate. This is where the system interprets your indexed content, deciding what it means across more than 24 dimensions, such as core identity, intent, and verifiability. If annotation fails, meaning the system misclassifies your entity or topic, every downstream decision inherits that error invisibly. Your page can be perfectly indexed yet completely misfiled, leading to poor performance in AI responses. Warning signs of annotation failure include a messy brand SERP, absence from relevant “vs.” comparisons, and being overlooked in “how-to” answers despite having the right content.

Following annotation, recruitment is the universal checkpoint where annotated content competes for a place in the system’s active knowledge structures. Each of the three graphs has its own recruitment criteria and update speed. Search results may refresh daily, knowledge graphs monthly, and LLM training data every few months. A coordinated strategy to earn a place in all three graphs is essential for sustained visibility.

Grounding is where the system, particularly an LLM, checks the veracity of recruited content for a specific query in real time. The competitive implication is clear: a brand with strong entity graph presence offers a cheap, fast, low-fuzz verification path. A brand without it forces the system onto a slower, high-fuzz path of document retrieval and interpretation, which breeds ambiguity and lowers confidence.

Finally, display determines how and where the system presents your content. It involves three simultaneous decisions: format, placement, and prominence. These decisions are governed by the principles of Understandability, Credibility, and Deliverability (UCD), which activate differently depending on the user’s funnel stage. A common failure here is the “framing gap,” where the system’s understanding of your brand does not match your intended positioning.

All these gates culminate in “won,” the zero-sum moment where one brand secures the conversion and all others lose. This resolves in three escalating ways: the imperfect click (user chooses from options), the perfect click (AI recommends one brand), and the agential click (an AI agent autonomously completes a transaction on the user’s behalf).

Competitive intensity escalates at each gate, creating a progressive narrowing. Failures in the ARGDW phase require competitive fixes, not just technical ones. To win, brands must ensure accurate annotation, build multi-graph presence, enable low-fuzz grounding, master contextual display, and prepare for agent-driven transactions. Understanding this complete pipeline transforms visibility from a guessing game into a manageable, strategic endeavor.
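The progressive narrowing described above can be pictured as a series of top-k cuts, one per gate. The sketch below is a toy model under stated assumptions: the per-gate scores, the `KEEP` cut-offs, and the brand names are all invented for illustration and do not come from the article or from any real system.

```python
from typing import Dict, List

GATES = ["annotation", "recruitment", "grounding", "display", "won"]

# Hypothetical number of candidates that survive each gate.
KEEP = {"annotation": 4, "recruitment": 3, "grounding": 2, "display": 2, "won": 1}

# Hypothetical per-gate scores, listed in the same order as GATES.
scores: Dict[str, List[float]] = {
    "Brand A": [0.9, 0.8, 0.9, 0.7, 0.6],
    "Brand B": [0.8, 0.9, 0.6, 0.9, 0.9],
    "Brand C": [0.7, 0.6, 0.8, 0.8, 0.7],
    "Brand D": [0.6, 0.7, 0.5, 0.5, 0.8],
    "Brand E": [0.3, 0.9, 0.9, 0.9, 0.9],  # strong later on, weak at annotation
}


def narrow(scores: Dict[str, List[float]]) -> Dict[str, List[str]]:
    """Rank the survivors at each gate and keep only the top KEEP[gate]."""
    survivors = list(scores)
    history: Dict[str, List[str]] = {}
    for i, gate in enumerate(GATES):
        ranked = sorted(survivors, key=lambda b: scores[b][i], reverse=True)
        survivors = ranked[: KEEP[gate]]
        history[gate] = survivors
    return history


history = narrow(scores)
for gate in GATES:
    print(f"{gate:12s} {history[gate]}")
```

In this toy data, Brand E is cut at annotation even though it would score well at every later gate, which mirrors the point that an annotation failure is inherited by everything downstream.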

(Source: Search Engine Land)